Pretrained Transformers as Universal Computation Engines: A Comprehensive Analysis

Investigating Cross-Modality Transfer, Internal Mechanisms, and the Road to Mechanistic Interpretability

Introduction

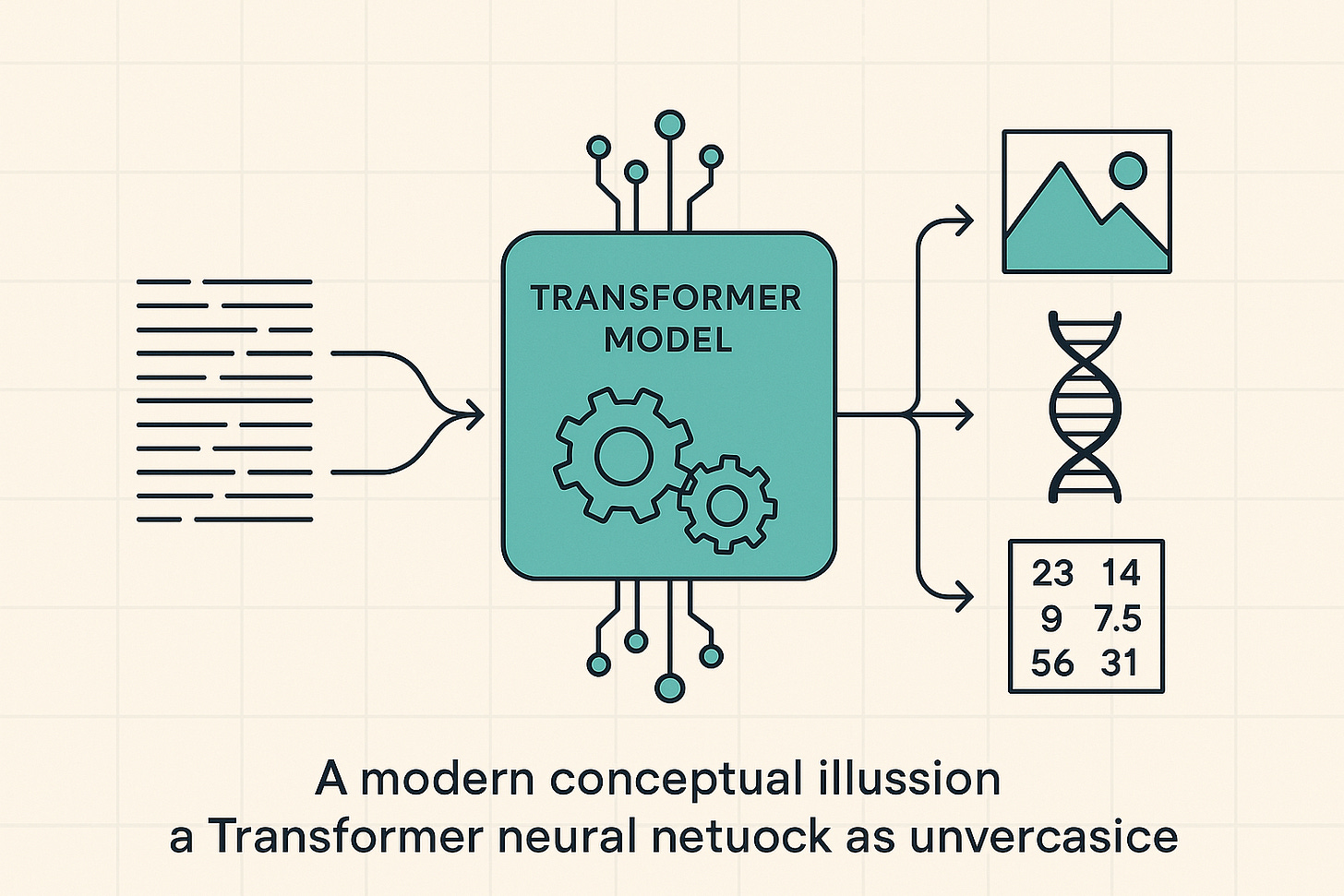

The transformer architecture, introduced by Vaswani et al. (2017), has emerged as a cornerstone of modern deep learning, driving the success of large-scale models across diverse domains. From natural language processing to image classification and protein structure prediction, transformers have proven their versatility. Unlike recurrent neural networks, which process a sequence one step at a time, transformers use self-attention to relate all positions of a sequence in parallel, which has enabled the development of massive models pretrained on vast unlabeled datasets. Traditionally, these models were then finetuned on downstream tasks within the same modality.

A seminal 2021 paper by Lu et al., "Pretrained Transformers as Universal Computation Engines," challenged and reshaped this understanding of transfer learning. It introduced a bold methodology termed the "Frozen Pretrained Transformer" (FPT), which moved beyond the established paradigm of transferring high-level feature representations to the more radical idea of transferring the computational mechanisms themselves. The paper posited that the self-attention layers, when trained on the complex, hierarchical structure of natural language, acquire a set of modality-agnostic, universal sequence processing operations. This reconceptualizes a large language model not merely as a linguistic specialist, but as a general-purpose computation engine.

To test this hypothesis, the researchers took GPT-2, a transformer pretrained on language, and finetuned only its linear input and output layers, positional embeddings, and layer norm parameters for tasks in entirely different modalities. This FPT approach performed comparably to, and in some cases better than, transformers trained from scratch on diverse tasks, including numerical computation, image classification, and protein fold prediction, while converging significantly faster. These findings suggest that the computations performed by language-pretrained self-attention layers transfer to sequential data well beyond language.

Taking this idea further, subsequent work by Pang et al. [3] uncovered a surprising capability of large language models (LLMs): they can serve as effective encoder layers for purely visual tasks without any reliance on language. In their design, a frozen transformer block from a pretrained LLM is inserted as a constituent layer within a standard visual encoder, with trainable linear layers aligning the feature dimensions. This simple design improved performance across a wide range of tasks, from 2D and 3D visual recognition to action recognition and even non-semantic motion forecasting. To explain this effectiveness, the authors proposed the information filtering hypothesis: pretrained LLM transformer blocks identify and amplify the most informative visual tokens within the latent representation, an idea supported by empirical evidence showing feature activations concentrated on relevant regions.

The impact and legacy of the work by Lu et al. are significant. It provided a bridge between unimodal language models and the broader vision of universal, multimodal artificial intelligence. By demonstrating the cross-modal potential inherent in LLMs, it can be read as a conceptual and empirical precursor to today's general-purpose multimodal models such as LLaVA and PaLM-E. The paper not only introduced a novel and efficient transfer learning technique but also posed fundamental questions about the nature of the computation learned within transformer architectures, questions that remain at the forefront of AI research.

The Foundational Hypothesis: Language Pretraining as a Source of Universal Computation

The Prevailing Paradigm of Transfer Learning

Prior to the work of Lu et al. [1], the dominant paradigm for transfer learning in deep learning was well-established and modality-specific. The standard recipe involved pretraining a large neural network on a massive, general-purpose dataset, followed by fine-tuning the entire model or its final layers on a smaller, task-specific dataset. Crucially, this transfer almost always occurred within the same modality. For instance, a vision model pretrained on the ImageNet dataset would be fine-tuned for a more specialized image classification or object detection task. Similarly, a transformer model like BERT or GPT, pretrained on a vast text corpus, would be fine-tuned for downstream natural language processing (NLP) tasks such as sentiment analysis or question answering.

The primary benefit of this approach was understood to be the transfer of high-level feature embeddings. The pretraining phase was thought to equip the model with a rich hierarchy of feature detectors—edges and textures in vision, or syntactic and semantic patterns in language—that provided a powerful starting point for learning a new task. This process enabled models to achieve high performance on tasks with limited labeled data, effectively leveraging the generalizable knowledge from the large pretraining corpus to avoid overfitting. The core assumption was that the learned features were specific to the pretraining modality and most useful for related tasks.

A Paradigm Shift: From Transferring Embeddings to Transferring Computation

The research by Lu et al. [1] represents a significant departure from this established paradigm. The authors proposed to investigate a new form of transfer, moving beyond the reuse of high-level embeddings to the direct transfer of the model's intermediate computational modules. Instead of viewing the pretrained layers solely as feature extractors, this work considered them as components of a computational engine. The central idea was to freeze the core machinery of the transformer—its self-attention and feed-forward blocks—and test whether this static, language-derived computational structure could be adapted to process information from entirely different modalities.

This proposition was radical because it contradicted the conventional wisdom that the internal mechanisms of a model, particularly the highly complex self-attention patterns, were intrinsically tied to the modality on which they were trained. The investigation sought to determine if these mechanisms, learned exclusively from the statistics of natural language, could generalize to perform meaningful computations on sequences of bits, image patches, or amino acids in a near-zero-shot manner (with respect to the frozen layers).

The Core Hypothesis

The paper's investigation is driven by a clear and ambitious hypothesis: that the transformer architecture, specifically its self-attention layers, can be pretrained on a data-rich modality like natural language to learn computational patterns and representations that are useful for arbitrary data sequences, thereby enabling effective transfer to different modalities. The authors frame this as an inquiry into whether language models are inherently capable of "universal computation," which they define as the ability to learn representations for predictive learning across a diverse range of data types.

This hypothesis implicitly reframes the very nature of a large language model. It suggests that such a model should not be viewed narrowly as a "language expert" but rather as a "general sequence processor" that happens to have been trained on language. The logic supporting this reframing can be deconstructed as follows: First, the authors explicitly state their goal is to transfer "intermediate computation modules," not just features. Second, they deliberately select a suite of benchmark tasks from disparate modalities—numerical, visual, and biological—that bear little superficial resemblance to natural language. Third, the core of the model is frozen, forcing these new data types to be processed by the exact same computational pathways learned from text. The success of this experiment would therefore provide strong evidence that the model has learned something more fundamental than linguistic patterns; it would imply that it has learned abstract principles of processing sequential information. One of the paper's authors articulated this concept by drawing an analogy to the hippocampus, which acts as a general, multimodal sequence processor in the brain. Thus, the research is not merely about a more efficient transfer learning technique; it is a profound test of the universality of the computations learned by transformers.

Anatomy of the Frozen Pretrained Transformer (FPT): A Methodological Deep Dive

The experimental validation of the paper's hypothesis rests on a carefully designed methodology known as the Frozen Pretrained Transformer (FPT). This approach involves adapting a large, pretrained language model for new tasks while modifying only a minuscule fraction of its parameters.

Base Model Architecture

The foundation for the FPT is a standard transformer-based language model. The authors selected GPT-2, specifically the base model configuration, which comprises roughly 124 million parameters (token embeddings included) distributed across 12 transformer layers, with a hidden dimension of 768. This model, a direct descendant of the architecture introduced in the seminal 2017 paper "Attention Is All You Need" [2], serves as the source of the pretrained computational machinery.

The "Freezing" Process

The defining characteristic of the FPT method is the "freezing" of the vast majority of the base model's parameters. This means that the weights learned during the extensive language pretraining phase are preserved and are not updated by gradient descent during the fine-tuning process on the new, non-language task.

Frozen Components: The core computational blocks of the transformer are held constant. This includes the weights of the multi-head self-attention mechanisms and the position-wise feed-forward networks within each of the 12 residual blocks. These components constitute the bulk of the model's parameters, meaning that approximately 99.9% of the model is frozen.

Trainable Components: In stark contrast, only a small and targeted subset of parameters, amounting to roughly 0.1% of the total, is trained or fine-tuned on the downstream task. This minimal set of trainable parameters consists of three key parts:

•Input Embeddings: A new, randomly initialized linear layer is introduced to serve as the input embedding layer. Its function is to take the tokens from the new modality (e.g., image patches or bits) and project them into the 768-dimensional space that the frozen transformer expects. The positional encodings are also fine-tuned to adapt to the new sequence structures.

•Output Head: The output layer is replaced with a new, randomly initialized linear layer that serves as the classification head. It takes the final representation from the transformer and maps it to the output classes of the specific downstream task.

•Layer Normalization Parameters: Critically, the affine parameters (gain and bias) of the Layer Normalization (LayerNorm) layers throughout the transformer are also fine-tuned.
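The split between trainable and frozen parameters described above can be checked with a little arithmetic. The following is a minimal sketch, not the authors' code: it assumes a GPT-2-base-like layout (12 blocks, hidden size 768, feed-forward size 4 × 768), a hypothetical downstream task with 64 input tokens of dimension 16 and 10 output classes, and illustrative parameter names. The pretrained token-embedding table is left out because the new input layer takes its place.

```python
from math import prod


def gpt2_style_shapes(d_model=768, n_layers=12, seq_len=64, d_in=16, n_classes=10):
    """Parameter shapes for a GPT-2-base-like FPT (names are illustrative)."""
    shapes = {
        "input_proj.weight": (d_in, d_model),         # new input layer
        "input_proj.bias": (d_model,),
        "wpe.weight": (seq_len, d_model),             # positions actually used
        "ln_f.weight": (d_model,),                    # final LayerNorm
        "ln_f.bias": (d_model,),
        "output_head.weight": (d_model, n_classes),   # new output layer
        "output_head.bias": (n_classes,),
    }
    for i in range(n_layers):
        p = f"h.{i}."
        shapes.update({
            p + "ln_1.weight": (d_model,),
            p + "ln_1.bias": (d_model,),
            p + "attn.c_attn.weight": (d_model, 3 * d_model),  # Q, K, V
            p + "attn.c_attn.bias": (3 * d_model,),
            p + "attn.c_proj.weight": (d_model, d_model),
            p + "attn.c_proj.bias": (d_model,),
            p + "ln_2.weight": (d_model,),
            p + "ln_2.bias": (d_model,),
            p + "mlp.c_fc.weight": (d_model, 4 * d_model),
            p + "mlp.c_fc.bias": (4 * d_model,),
            p + "mlp.c_proj.weight": (4 * d_model, d_model),
            p + "mlp.c_proj.bias": (d_model,),
        })
    return shapes


def is_trainable(name):
    """FPT rule: train input/output layers, positions, and every LayerNorm."""
    parts = name.split(".")
    if parts[0] in {"input_proj", "output_head", "wpe", "ln_f"}:
        return True
    return any(part in {"ln_1", "ln_2"} for part in parts)


def count_parameters(shapes):
    """Return (trainable, frozen) parameter counts."""
    trainable = sum(prod(s) for n, s in shapes.items() if is_trainable(n))
    frozen = sum(prod(s) for n, s in shapes.items() if not is_trainable(n))
    return trainable, frozen


trainable, frozen = count_parameters(gpt2_style_shapes())
fraction = trainable / (trainable + frozen)
print(f"trainable={trainable:,} frozen={frozen:,} fraction={fraction:.2%}")
# trainable=108,298 frozen=85,017,600 fraction=0.13%
```

Under these assumptions the trainable share comes out at roughly 0.1%, in line with the figure quoted above. The exact value depends on the sequence length, input dimension, and number of classes of the task.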

The Rationale for Fine-Tuning LayerNorm

The decision to fine-tune the LayerNorm parameters is a subtle but crucial element of the FPT methodology. While the fundamental computations of the attention and feed-forward layers remain fixed, the distribution of activations produced by these layers can vary significantly when the model is presented with data from a new modality. Layer Normalization standardizes each token's activations across the feature dimension to have a mean of zero and a variance of one, and then rescales and shifts them using its learned gain and bias parameters. By allowing these parameters to be fine-tuned, the model can adapt the statistics of its internal activations to the new data distribution without altering the underlying learned functions of the main layers. This provides a powerful yet parameter-efficient way to bridge the distributional gap between the pretraining domain (language) and the target domain (e.g., vision). One of the authors later pointed to a follow-up paper, "Frozen," which reportedly achieved strong performance without fine-tuning even the LayerNorm parameters, suggesting an even greater degree of universality in the pretrained model.
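The normalize-then-rescale behaviour described above can be illustrated in a few lines. This is a minimal sketch in plain Python for a single token vector; the function name, the example values, and the epsilon constant are assumptions chosen for illustration and are not taken from the paper.

```python
from math import sqrt


def layer_norm(x, gain, bias, eps=1e-5):
    """Standardize one activation vector, then apply the affine parameters."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [g * (v - mean) / sqrt(var + eps) + b
            for v, g, b in zip(x, gain, bias)]


activations = [2.0, 4.0, 6.0, 8.0]

# Default affine parameters: output has (almost exactly) zero mean, unit variance.
identity = layer_norm(activations, gain=[1.0] * 4, bias=[0.0] * 4)

# Changed gain and bias: the same standardized values, rescaled and shifted.
adapted = layer_norm(activations, gain=[0.5] * 4, bias=[1.0] * 4)
```

Only `gain` and `bias` differ between the two calls. In the FPT setting these are the parameters that training is allowed to move, while the layers that consume the normalized activations stay fixed.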

Input Tokenization

A prerequisite for applying the language-pretrained transformer to other modalities is the conversion of the input data into a sequence of tokens, analogous to words in a sentence. This tokenization strategy is tailored to each task. For vision tasks like MNIST and CIFAR-10, images are partitioned into a grid of non-overlapping patches (e.g., 4x4 pixels), with each patch treated as a single token. For numerical tasks like Bit XOR, the input is a sequence of individual bits. Once the input is tokenized, the sequence is fed into the FPT. The tokens can interact only through the frozen self-attention mechanism, so all task-specific adaptation must come from the small trainable set: the input embeddings, positional embeddings, LayerNorm parameters, and output head.
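The patch-based tokenization described above can be sketched as follows. This is an illustrative example in plain Python, assuming a single-channel image stored as a list of rows; the function names are hypothetical, and the real pipeline would then pass each token through the trainable linear input layer.

```python
def image_to_patch_tokens(image, patch=4):
    """Split a 2D image into non-overlapping patch tokens (row-major order)."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image sides must be multiples of the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            # Flatten one patch into a single token vector.
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens


def bits_to_tokens(bits):
    """Treat each bit of a string such as '1011' as a one-dimensional token."""
    return [[int(b)] for b in bits]


# An 8x8 toy image whose pixel value encodes its position.
image = [[row * 8 + col for col in range(8)] for row in range(8)]
tokens = image_to_patch_tokens(image)
print(len(tokens), len(tokens[0]))  # 4 16
```

With 4x4 patches, a 32x32 single-channel image would yield 64 tokens of dimension 16 under the same scheme.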

To provide a clear overview of the FPT's configuration, the following table summarizes the status of its key components.

| Component | Status | Approx. share of parameters | Role |
| --- | --- | --- | --- |
| Input embedding layer | Randomly initialized and trained | < 0.1% | Maps the new data format into the model's fixed internal space |
| Output classification head | Randomly initialized and trained | < 0.1% | Maps the model's fixed internal space to the task outputs |
| Positional encodings | Fine-tuned | < 0.1% | Adapt the model to the sequential structure of the target modality |
| Layer normalization parameters | Fine-tuned | < 0.1% | Adapt the model to the activation statistics of the target modality |
| Self-attention weights | Frozen after pretraining | ~99.9% (together with the feed-forward weights) | Core, modality-agnostic relational computation |
| Feed-forward network weights | Frozen after pretraining | ~99.9% (together with the self-attention weights) | Non-linear processing |

Table 1: FPT Architectural and Training Configuration. This table details the division between the frozen computational core and the minimal set of trainable parameters in the FPT methodology.

Empirical Validation Across Modalities: A Comprehensive Results Analysis

The central claims of the paper are substantiated through a series of empirical evaluations on a carefully selected, diverse suite of benchmark tasks. These experiments were designed to rigorously test the FPT's generalization capabilities across fundamentally different data modalities.

Overview of Benchmarks

The chosen benchmarks span three distinct domains, each probing different aspects of computational and reasoning abilities.

•Numerical Computation: This category includes tasks designed to evaluate algorithmic reasoning and memory over long contexts.

•Bit Memory / Bit XOR: Simple algorithmic tasks that require the model to recall or perform logical operations on sequences of bits.

•ListOps: A more complex task from the Long Range Arena (LRA) benchmark, where the model must parse and evaluate nested expressions built from list operators such as MAX and MIN (e.g., [ MAX 4 3 [ MIN 2 3 ] ] -> 4). This tests hierarchical reasoning and long-range dependency handling.

•Computer Vision: Standard image classification tasks are used to test transfer to the visual domain.

•MNIST: Classification of handwritten digits.

•CIFAR-10: Classification of small, low-resolution color images into 10 object categories.

•CIFAR-10 LRA: A modified version of CIFAR-10 where the image is unrolled into a much longer sequence of pixels, explicitly testing the model's ability to handle long-range interactions in a visual context.

•Protein Biology: This domain tests the model's applicability to scientific data.

•Remote Homology: A protein fold prediction task in which the model must assign each protein sequence to its structural fold, a challenging problem in computational biology.
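To make the ListOps task from the list above concrete, the following sketch evaluates a nested expression such as [ MAX 4 3 [ MIN 2 3 ] ]. It is a small recursive parser written for illustration only: it assumes space-separated tokens and supports just the MAX and MIN operators named in the text, whereas the benchmark itself includes further operators that are omitted here.

```python
def evaluate_listops(expression):
    """Evaluate a space-separated ListOps-style expression (MAX and MIN only)."""
    tokens = expression.split()
    operators = {"MAX": max, "MIN": min}
    position = 0

    def parse():
        nonlocal position
        token = tokens[position]
        position += 1
        if token != "[":
            return int(token)              # a plain digit
        operator = operators[tokens[position]]
        position += 1
        arguments = []
        while tokens[position] != "]":
            arguments.append(parse())      # digits or nested sub-expressions
        position += 1                      # consume the closing bracket
        return operator(arguments)

    value = parse()
    if position != len(tokens):
        raise ValueError("unexpected tokens after the expression")
    return value


print(evaluate_listops("[ MAX 4 3 [ MIN 2 3 ] ]"))  # 4
```

A model solving ListOps has to produce the same answer from the token sequence alone, which is why the task stresses hierarchical structure: the inner MIN must be resolved before the outer MAX.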

Analysis of Performance Results

The paper's primary results are summarized in a bar chart (Figure 1), which compares the test accuracy of the language-pretrained FPT against two key baselines: a transformer of a similar size trained fully from scratch on the downstream task ("Full Transformer") and a randomly initialized Long Short-Term Memory (LSTM) network. The detailed performance across these benchmarks reveals a consistent and compelling pattern.

On Numerical Tasks: For Bit Memory and Bit XOR, the FPT achieves 100% accuracy, matching a full transformer trained from scratch. This suggests that the frozen, language-pretrained layers can support basic algorithmic operations. For ListOps, the FPT reaches a test accuracy of about 38%, on par with the full transformer (38%) and well above the randomly initialized LSTM (17%). The FPT therefore handles this more hierarchical task about as well as a transformer whose every weight was trained on it.
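To make the Bit XOR task concrete, a single training example can be generated as below. This is a sketch under stated assumptions: the model sees two bitstrings back to back and must output their element-wise XOR, and the default string length of five is an assumption about the task setup rather than something stated in this article. The function name is hypothetical.

```python
import random


def make_bit_xor_example(length=5, rng=None):
    """Return (input_bits, target_bits) for one Bit XOR example."""
    rng = rng or random.Random()
    first = [rng.randint(0, 1) for _ in range(length)]
    second = [rng.randint(0, 1) for _ in range(length)]
    target = [a ^ b for a, b in zip(first, second)]
    # The two strings are concatenated into one input sequence of 2 * length bits.
    return first + second, target


inputs, target = make_bit_xor_example(rng=random.Random(0))
```

Because each output bit depends on two input positions that sit `length` tokens apart, the model has to combine information across positions, which in the FPT can only happen through the frozen self-attention layers.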

On Computer Vision Tasks: On MNIST, the FPT achieves 98% accuracy, close to the full transformer's 99%. On CIFAR-10, the FPT reaches 72%, slightly above the full transformer's 70%. The LSTM baseline is also strong on these two short-sequence tasks, at roughly 99% and 74% respectively. The picture changes on CIFAR-10 LRA, where the image is unrolled into a long pixel sequence: the FPT reaches about 39%, close to the full transformer's 42%, while the LSTM falls to 12%. This gap suggests that the attention-based models, including the frozen one, cope far better with long-range dependencies than the recurrent baseline.

On Protein Biology Tasks: For the Remote Homology task, the FPT achieves about 13% accuracy, compared with 9% for the full transformer and 12% for the LSTM. Absolute accuracy is low for every model on this difficult task, but the FPT's competitive result further supports its cross-modal generalization.

Overall, the empirical results support the paper's central hypothesis. With only a small fraction of its parameters trained, the FPT is broadly competitive with a transformer trained from scratch across numerical, visual, and biological tasks. This points to the efficiency and generality of the computational mechanisms learned during language pretraining.

Conclusion

The work by Lu et al. [1] represents a pivotal moment in the understanding of transfer learning and the capabilities of large language models. By demonstrating that a transformer pretrained on natural language can serve as a "universal computation engine" for diverse non-linguistic tasks with minimal fine-tuning, the authors have significantly broadened the scope of what these models can achieve. The Frozen Pretrained Transformer (FPT) methodology not only offers a computationally efficient approach to adapting large models to new domains but also provides compelling evidence that the internal mechanisms of transformers, particularly self-attention, learn modality-agnostic computational patterns.

This research has profound implications for the development of general-purpose AI. It suggests that the path to multimodal intelligence may not require training from scratch on every new data type but rather leveraging the rich, abstract computational abilities acquired through extensive language pretraining. The success of FPT across numerical, visual, and biological domains underscores the universality of the transformer architecture and the power of its learned operations. This foundational work has paved the way for the emergence of powerful foundation models that seamlessly integrate and process information from various modalities, pushing the boundaries of artificial intelligence towards more generalized and adaptable systems.

References

[1] Lu, K., Grover, A., Abbeel, P., & Mordatch, I. (2021). Pretrained Transformers as Universal Computation Engines. arXiv preprint arXiv:2103.05247. https://arxiv.org/abs/2103.05247

[2] Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., ... & Polosukhin, I. (2017). Attention Is All You Need. Advances in Neural Information Processing Systems, 30.

[3] Pang, Z., Xie, Z., Man, Y., & Wang, Y.-X. (2024). Frozen Transformers in Language Models Are Effective Visual Encoder Layers. arXiv preprint arXiv:2310.12973. https://arxiv.org/abs/2310.12973